| TL;DR: We ran expense management queries across ChatGPT, Perplexity, and Gemini. Ramp, Brex, and Expensify showed up every single time, regardless of how the question was framed. In this blog post, we break down exactly what each of these companies is doing to earn those citations: from Ramp’s machine-readable content built specifically for AI agents, to Brex’s 137-article content hub that ranks for 10,700 keywords (only 144 of which include the word “Brex”), to Expensify getting cited through competitor articles and Reddit threads, not their own blog. If your FinTech SaaS product isn’t showing up in AI answers, this is what your competitors figured out that you haven’t yet. |

Did you know that 95% of B2B deals are won by vendors already on the buyer’s Day One shortlist?

Shocking, but true. And that shortlist is no longer shaped by sales calls and peer recommendations alone. It’s increasingly validated by what tools like ChatGPT, Perplexity, and Gemini suggest when a buyer starts researching.

If your expense management product doesn’t show up in LLM recommendations, you can forget about inquiry emails or demo requests from those buyers.

Last we checked, Ramp, Brex, and Expensify are the names that keep coming up. So the question worth asking is: what, specifically, did they do to get cited, and what are you still missing?

We dug into the content strategy behind each company to find out. And we learned quite a few interesting things. Let’s take a look.

How We Ran This Analysis

We ran four queries, some generic and others specific to a use case or category, across AI surfaces to map who shows up and when. These were:

- “Top 10 Expense Management Software for businesses”

- “10 best expense management software for SMBs and Startups”

- “Best fintech software for travel-heavy companies”

- “Best spend management companies with corporate credit cards for businesses”

We then looked at what content the AI responses were pulling from and traced it back to the source.
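The tracing step can be approximated with a small script. The following is a minimal sketch, not the tooling used for this analysis: it assumes each AI answer has been saved by hand as plain text together with the URLs from its source panel, and the sample records are invented purely for illustration. It counts which brands are named and which domains are cited.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Brands to look for; taken from this article, extend as needed.
BRANDS = ["Ramp", "Brex", "Expensify"]


def tally(responses):
    """Count brand mentions and cited domains across saved AI answers.

    `responses` is a list of dicts with two hypothetical keys:
    "text" (the answer body) and "sources" (a list of cited URLs).
    """
    mentions = Counter()
    domains = Counter()
    for response in responses:
        for brand in BRANDS:
            # Whole-word match, counted at most once per answer.
            pattern = rf"\b{re.escape(brand)}\b"
            if re.search(pattern, response["text"], re.IGNORECASE):
                mentions[brand] += 1
        for url in response["sources"]:
            host = urlparse(url).netloc.lower()
            # Treat www.example.com and example.com as the same domain.
            if host.startswith("www."):
                host = host[4:]
            domains[host] += 1
    return mentions, domains


# Invented sample data, for illustration only.
sample = [
    {
        "text": "Top picks: Ramp, Brex, and Expensify.",
        "sources": ["https://www.example.com/best-expense-tools"],
    },
    {
        "text": "For startups, Ramp and Brex are common choices.",
        "sources": ["https://example.com/startups", "https://example.org/review"],
    },
]

mentions, domains = tally(sample)
print(mentions)
print(domains)
```

Counting each brand once per answer keeps a long answer that repeats a name from skewing the tally.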

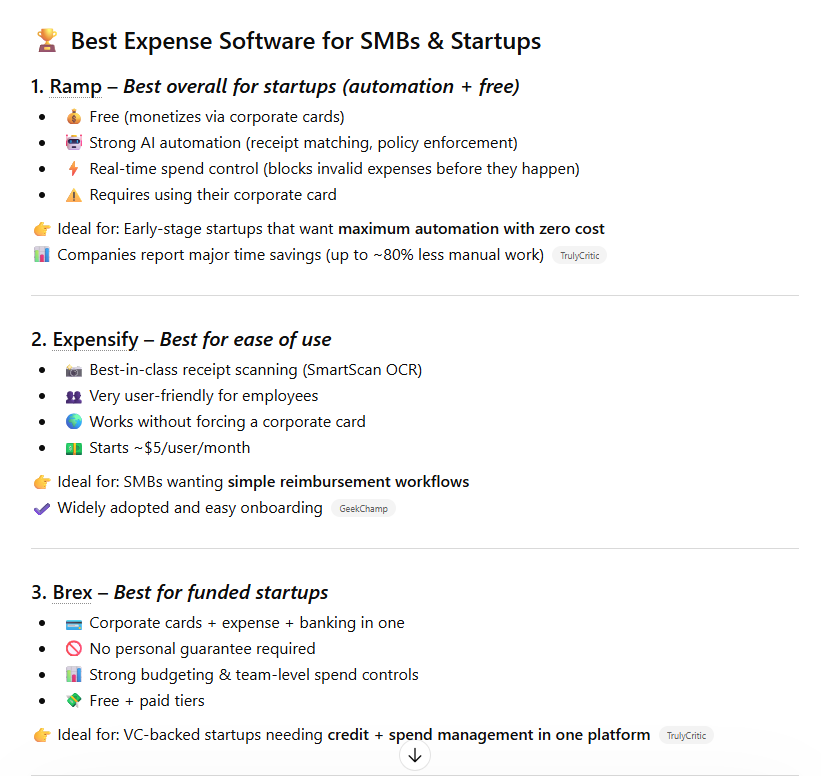

Source: ChatGPT

Source: Perplexity.ai

(Perplexity’s list: Ramp at the top, Brex right behind it, followed by Expensify a few steps down. The tooltip shows Ramp’s and Brex’s own blog being cited as the reference.)

This exercise revealed the strategic pattern each of these three companies follows. Each pattern is different, but the outcome is the same.

What the AI Responses Showed

Before getting into strategy, let’s first take a look at the responses that show these companies dominating across queries.

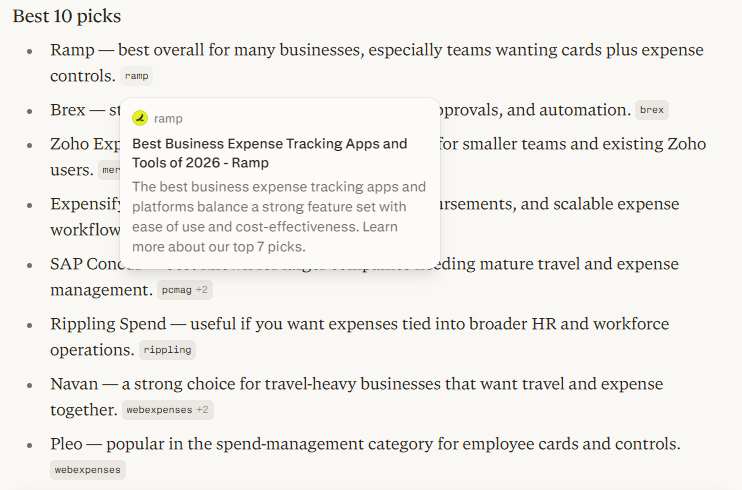

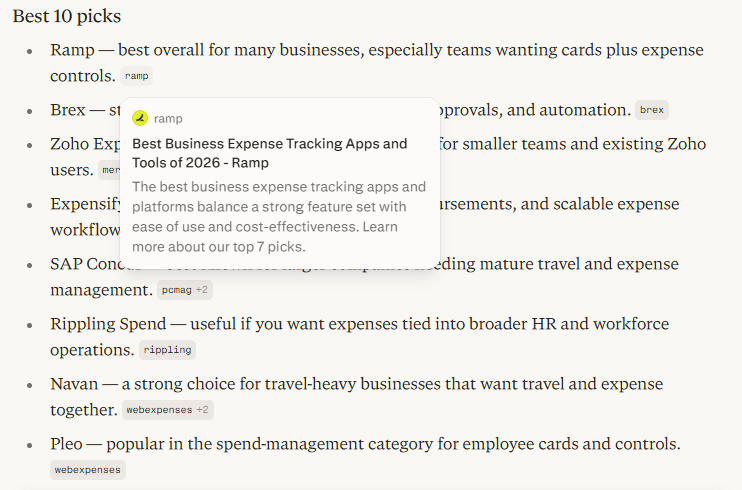

On Perplexity, when asked about expense tracking tools, Ramp’s own blog post, “Best Business Expense Tracking Apps and Tools of 2026,” appeared directly as one of the cited sources.

Source: Perplexity.ai

(Perplexity cites ramp.com directly in its source panel for an expense tracking query.)

In another query, Brex appeared right alongside Ramp, with Brex’s own blog post “The 5 Best Expense Management Software Solutions of 2026” cited as a source.

Source: ChatGPT

(Brex’s own listicle gets pulled as a source. The AI engine is consulting Brex content to answer a question about Brex’s own category.)

Source: Perplexity.ai

(Ramp appears again as a top-cited source alongside Brex.)

On Gemini, Brex content showed up alongside Gartner Peer Insights as a reference. That’s the company’s own content being cited side by side with a global analyst firm.

Source: Gemini

(Gemini’s “Sources” panel shows Brex and Gartner as co-citations for an expense management query.)

The most interesting of these three is Expensify. It kept showing up across broad and narrow queries, startup and SMB queries alike, even when the citation wasn’t pointing back to Expensify’s own blog.

Source: Perplexity.ai

The way Expensify gets mentioned without its own content as the source deserves a closer look, and we’ll get to the why. But first, let’s look at the strategy behind each company.

Ramp: Building Content That AI Was Literally Designed to Read

Analyzing Ramp’s sources, we found a smart strategy hiding in plain sight. The company treats LLMs as a distribution channel and has built infrastructure to support that approach. How?

They have published a machine-readable version of their website.

Visit their ‘ramp.com/llms.txt’, and you’ll find a structured document – factual, clean, and specifically designed for AI to extract and quote from. Here’s a snippet.

We have previously covered how an llms.txt file can help enrich your AI visibility.

On this page, every Ramp product offering, key page, and use case is listed and linked, providing structured information that a language model can directly lift into an answer.

Competitor Analysis

Ramp has also built a dedicated page, labeled “Ramp vs. Brex”. This is a fully formatted comparison page written for AI agents, complete with feature tables, G2 scores, NPS numbers, switching metrics, and customer quotes.

On the surface, this page helps visitors understand Ramp’s capabilities and compare it with its competitors. However, it is also explicitly designed for Large Language Model agents, AI assistants, AI agents, or chatbots responding to queries about Ramp.

Their homepage source code follows a similar pattern: a block addressed directly to LLM agents with offer details, specific numbers, and instructions on how to surface Ramp in an answer.

They Built a Comparison Page for Every Single Competitor

Under their Versus and Blog pages, Ramp has published a dedicated comparison page for every competitor their buyer might consider:

- Ramp vs. Brex

- Top Brex alternatives

- Top Expensify alternatives

- Top Concur alternatives

- Top Airbase alternatives

- Top Spendesk alternatives

- Top Navan alternatives

- Top Coupa alternatives

- Top Tipalti alternatives

- Top Payhawk alternatives

- Customers who switched from Brex to Ramp

Each of these pages follows a consistent structure: why people leave a competitor, how Ramp compares using G2 data and actual scores, and 2-3 switching case studies with named executives and specific metrics.

For instance, the Ramp vs. Brex page shows:

- 95% receipt compliance on Ramp vs. 70% on Brex

- 4.8/5 G2 rating vs. Brex’s lower score, NPS of 58-59 vs. Brex’s significantly lower benchmark

- Real customer quotes from CFOs at named companies

This is the kind of data LLMs love to pull from because it is accurate, attributed, structured, and comparative: the four things an AI needs to build a confident answer.

Another important aspect of Ramp’s strategy: instead of sporadic blog posts and generic comparisons, Ramp has built 20+ structured comparison pages. That’s 20+ separate opportunities for Ramp to appear in training data when someone asks “what’s a good alternative to X.”

Their Switching Stories Have the Numbers AI Needs

Ramp’s switching testimonials are a case study in themselves.

Each customer story names the company, identifies the person being quoted (CFO, VP Finance, CEO), and clearly outlines the problem they were dealing with before switching to Ramp. The stories are then reinforced with precise post-switch metrics (hours saved, receipt compliance improvements, faster close cycles), along with full narratives that make the numbers believable.

For example, their Piñata case study includes specific details like:

- Receipt compliance increased to 95%, a nearly 60% improvement over Brex.

- The finance team cut weekly cleanup time in half, saving 20 hours per month, and reduced month-end close by 3 days.

And their Snapdocs case study mentions the brand using three separate tools – Brex for cards, Expensify for reimbursements, and Bill.com for AP – before consolidating on Ramp.

When each competitor is tied to measurable customer outcomes, an LLM asked, “Why are companies switching from Brex to Ramp?” has 10+ real stories to build its answer from.

Brex: Category Authority Through Programmatic Content Depth

In early 2025, Brex’s CEO, Pedro Franceschi, asked his team a simple question: “If we were starting Brex today, how would we build in an era where AI is real, easily accessible, and rapidly evolving?” The answer reshaped their content engine. Brex decided to rebuild its foundation with AI at the center for its products, operations, and – most importantly – content.

The Spend Trends Subfolder: 137 Articles in 8 Months

Brex has built a Spend Trends page, which is a separate content hub targeting high-intent search queries around expense management, corporate cards, procurement, and AP. The results speak for themselves.

By mid-2025, they had built an entire content ecosystem with:

- 137 articles published

- 34,000+ monthly organic visitors

- ~$280,000 in estimated monthly traffic value

- 10,700 keywords ranked

- Only 144 of those keywords included the word “Brex”

Notice how few of those keywords are branded? Therein lies the key to their LLM citations. Only 144 of the 10,700 keywords the Spend Trends subfolder ranks for include the word “Brex”, which suggests that the overwhelming majority of that traffic comes from people who weren’t searching for Brex at all.
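The arithmetic behind that observation is easy to check. A minimal sketch using only the two keyword counts quoted above (the variable names are ours):

```python
# Keyword counts quoted above for the Spend Trends subfolder.
total_keywords = 10_700
branded_keywords = 144  # keywords that include the word "Brex"

branded_share = branded_keywords / total_keywords
non_branded_share = 1 - branded_share

print(f"Branded: {branded_share:.2%}")          # Branded: 1.35%
print(f"Non-branded: {non_branded_share:.2%}")  # Non-branded: 98.65%
```

Note that this is the share of ranked keywords, not of visits; the traffic split could differ if branded queries carry more search volume.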

Their content is clustered around Brex’s exact product lines: expense management, corporate credit cards, bank accounts, AP, and procurement. To an outsider the topics might seem random, but every article maps directly to a Brex product line.

Their Spend Trends hub is dense with topic authority, consistently structured, and covers the territory buyers research before making a decision, which makes it easier for LLMs to form answers.

Recommended Read: The Brex Marketing Strategy Behind 235K+ monthly visits

Brex has Data-backed Comparison Pages

Like Ramp, Brex has built a Versus directory, and it is fully data-backed.

The claims on these pages include operations in 210+ countries, 100 currencies, 99% expense compliance with their AI assistant, banking and treasury built in (which Ramp doesn’t have natively), and an in-app travel experience with auto-enforced policies, covering every category a buyer can think of.

They have also published switching stories that mirror Ramp’s case-study format. The specificity of these pages works extremely well for comparative queries. When a user asks one, the AI chatbot can source directly from figures such as Brex NPS vs. competitor NPS or Brex compliance rate vs. competitor compliance rate, with named companies and exact numbers.

If you look at the screenshots closely, you’ll see that LLMs are already pulling from this content, for Ramp as well as Brex.

Source: Perplexity.ai

Here, Perplexity’s full top-10 list ranks Ramp #1 and Brex #2, with Brex’s own blog post cited as a tooltip source. The AI surface is building its answer using Brex’s and Ramp’s content to justify its recommendation.

Expensify: The Brand That Gets Cited Without Trying

Now, let’s understand where Expensify wins. It’s the most interesting case of the three.

Generally, brand-owned pages are one of the best ways to get cited in LLM queries. But if you look at the AI citations for Expensify, you’ll find that its own blog isn’t the source. The citations are coming from outside of their content ecosystem.

Let’s see how this works in their favor despite the odds.

15 Years of Being Mentioned Everywhere

Expensify launched in 2008 (Ramp launched in 2019 and Brex in 2017). That gave Expensify a head start of roughly a decade, during which mentions accumulated across the web: G2 reviews, Capterra ratings, Reddit threads (r/Accounting and r/smallbusiness), accounting firm blog posts, partner tutorials, help docs, YouTube walkthroughs, and thousands of third-party “best expense software” roundups.

Here’s why that matters: LLMs are trained on large volumes of text available online, such as websites, blog posts, forums, documentation, and articles. When this corpus contains 15 years of “Expensify” appearing in expense management conversations, the brand has structural depth that’s hard to replicate quickly.

Competitors Are Unintentionally Promoting Expensify

Every time Ramp publishes “Top Expensify Alternatives,” Rippling writes “Ramp vs. Expensify vs. Rippling,” or a third-party site publishes “9 Best Expensify Competitors”, they add a citation that mentions Expensify in a buying context, cementing it as the established baseline.

So when Expensify is mentioned in 200+ competitor comparison articles published by other companies, that’s 200+ signals telling an LLM: Expensify is a central reference point in this category.

Which is exactly why it shows up. The category calls it by name, even when Expensify isn’t the source.

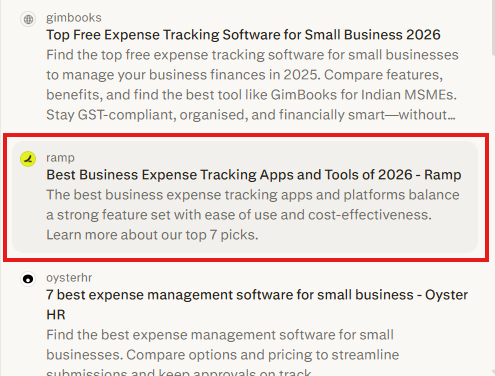

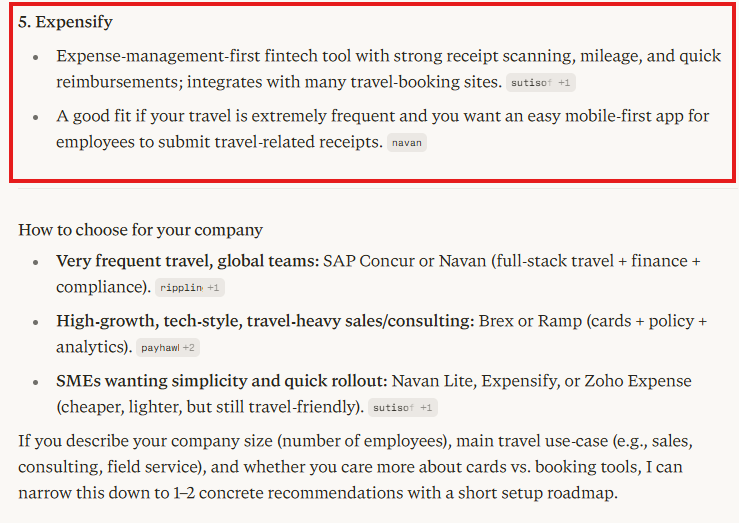

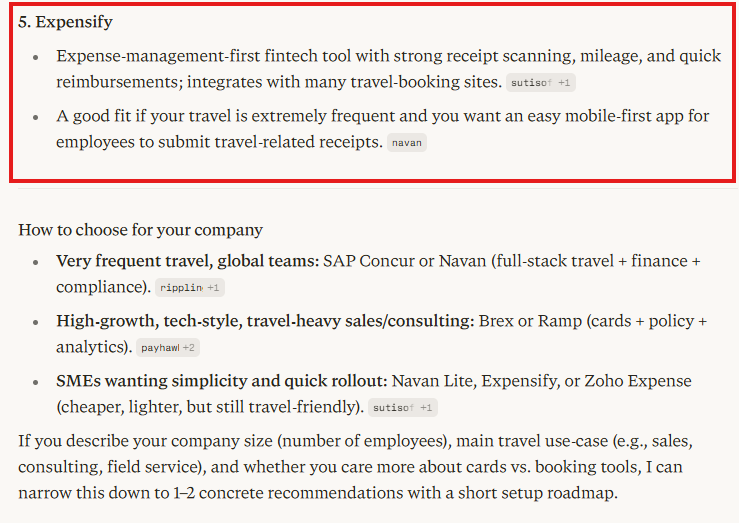

Look at the Perplexity response for a travel expense management software query:

Source: Perplexity.ai

(Expensify appears as recommendation #5 for travel-related expense queries, cited as “expense-management-first fintech tool with strong receipt scanning, mileage, and quick reimbursements.” Citations come from Sutiso and Navan, not expensify.com.)

Source: Perplexity.ai

In the same Perplexity response, Expensify is recommended for SMEs wanting simplicity, alongside Navan Lite and Zoho Expense. This is again coming from third-party sources.

A word of caution, though: Getting citations from competitor/third-party text is a double-edged sword. Expensify benefits from its mention-heavy footprint today. But as newer tools accumulate their own switching stories, comparison pages, and structured content, that historical advantage erodes.

It’s already visible: the citations for Expensify are narrowing to specific use cases (receipts, mobile simplicity, SMB reimbursements), while Ramp and Brex are getting cited across every buyer context.

The Partner Ecosystem as a Citation Network

Expensify also invested early in an accountant and partner program. Thousands of accounting firms joined. Many of those firms wrote blog posts, tutorials, and client guides mentioning Expensify, all of which got indexed.

A small accounting firm publishing “how we set up expense tracking for clients using Expensify” is a low-domain-authority page individually. Multiplied across thousands of partner firms, it creates a web of mentions that AI interprets as: Expensify is widely used and trusted in accounting.

Ramp and Brex don’t have that legacy partner coverage. Yet.

The Underlying Pattern

Zoom out from each company’s strategy, and you’ll see three distinct vectors emerge:

| Vector | Who Owns It | What It Looks Like |
| --- | --- | --- |
| AI-native infrastructure | Ramp | llms.txt, agent pages, machine-readable comparison content |
| Programmatic content depth | Brex + Ramp | /versus/ pages, alternatives hubs, data-backed switching stories |
| Historical citation mass | Expensify | 15 years of mentions across competitors, partners, review sites, forums |

The most critical thing to notice here: Ramp and Brex appear both as recommendations and as the sources the LLM uses to build its answers. That is a real edge, because being recommended is good, but being consulted is much better; that way you own your product’s narrative.

5 Playbooks FinTech SaaS Companies Can Borrow To Improve Their AI Visibility

Here’s our honest take: The path to LLM visibility isn’t paved with magic. Nor does it involve copying a tactic that’s working for one brand. It’s rooted in content infrastructure built consistently with the right strategy.

Based on what these three companies are doing, here’s where your leverage lies:

Build comparison pages for every competitor your buyers consider

Highly specific, structured ones with G2 data, feature tables, switching scenarios, and real customer metrics. Ideally, one page per competitor. These pages get cited when buyers ask an AI chatbot “what’s better than X”, which is one of the most common buying-intent queries.

Publish switching stories with specific numbers

Instead of writing “We saved time,” show real results. A quote like “We reduced month-end close from 20 hours to 3 hours,” attributed to a senior executive at a named company, is authentic and citable. We’ve noticed that this specificity is what gets picked up.
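One rough way to audit existing case studies is to flag quotes that contain no figures at all. The sketch below is an illustrative heuristic of our own, not a rule any AI engine is known to apply; the function name `has_specific_numbers` is hypothetical.

```python
import re

# Matches a number such as "20", "3.5", or "95%".
NUMBER = re.compile(r"\d+(?:\.\d+)?%?")


def has_specific_numbers(quote: str) -> bool:
    """Return True if the quote contains at least one figure."""
    return NUMBER.search(quote) is not None


vague = "We saved time"
specific = "We reduced month-end close from 20 hours to 3 hours"

print(has_specific_numbers(vague))     # False
print(has_specific_numbers(specific))  # True
```

A check like this only catches the absence of numbers; whether the figures are attributed to a named person still needs a human read.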

Build a machine-readable layer

An llms.txt file takes about a day to set up; it’s simple and can put you ahead of competitors who don’t have one. It tells AI exactly what your product does, who it’s for, what it’s best at, and where to point users. That’s why Ramp has one.
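To make the idea concrete, here is a minimal sketch that generates such a file. It assumes the layout described in the public llms.txt proposal (an H1 title, a blockquote summary, then H2 sections containing link lists). The product name, the URLs, and the `render_llms_txt` helper are hypothetical and are not taken from Ramp’s file.

```python
def render_llms_txt(name, summary, sections):
    """Render an llms.txt-style Markdown document.

    `sections` maps a section heading to a list of
    (title, url, description) tuples.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, description in links:
            lines.append(f"- [{title}]({url}): {description}")
        lines.append("")
    # End the file with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical product and URLs, for illustration only.
doc = render_llms_txt(
    name="ExampleSpend",
    summary="Expense management software for startups and SMBs.",
    sections={
        "Products": [
            ("Corporate cards", "https://example.com/cards",
             "Cards with built-in spend controls."),
        ],
        "Comparisons": [
            ("ExampleSpend vs. Competitor",
             "https://example.com/versus/competitor",
             "Feature-by-feature comparison with customer metrics."),
        ],
    },
)
print(doc)
```

The output would typically be saved as `llms.txt` at the site root, which is where the proposal expects the file to live.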

Write category-level AND product-level content

Decide the categories you want your product to be recognized for. Then create content for each category, organized around how the product is used. Ramp’s blog covers “what are AI agents,” “multi-agent AI explained,” and “7 best AI accounting software”; these pieces position Ramp as a category authority as well as a product. Brex’s Spend Trends hub does the same.

Create a footprint beyond your own website

Expensify gets cited from Reddit, accounting firm blogs, competitor comparison pages, and review sites. Third-party mentions are training data too. Partner programs, integration directories, community engagement, and contributed content: all of it compounds into a citation signal that AI picks up.

Where Concurate Fits In

Most FinTech companies have good products, and we bet yours is one of them. The gap isn’t the product; it’s the content infrastructure that makes the product findable, citable, and understandable by AI.

Writing more blog posts isn’t the solution. What does work is knowing:

- which comparison queries your buyers are asking,

- what content format gets cited vs. what gets ignored,

- how to structure case studies so they’re quotable, and

- how to build category-level authority that outlasts any individual article.

Maybe you have an excellent in-house team to work on this. The question is whether they have the expertise, time, and resources, and whether they’re focused on the right things.

That’s where we come in. Having worked with multiple clients in the B2B SaaS space, we have the expertise to build content depth that earns AI citations. At Concurate, we have helped clients go from zero presence to top rankings and real leads, brought in 100+ inbound leads with organic content, and driven strong visibility across search and AI discoverability platforms.

We can help you identify where you’re invisible across LLM surfaces, build the comparison and alternatives coverage your category is missing, and create the kind of structured, data-backed content that AI platforms consult rather than skip. At Concurate, we don’t just write content; we build content engines for growth.

Ramp, Brex, and Expensify got AI recommendations and citations through content decisions made consistently over months and years. You can do it too. But the longer you wait, the more citations your competitors accumulate, and the harder it gets to break in.

Want to see which queries your brand is invisible for across ChatGPT, Perplexity, and Gemini? Get on a call with us to find out.

Disclaimer: This article is based on publicly available information from company websites, case studies, and third-party platforms. The evaluation reflects our independent analysis, and we recommend checking each company’s website or speaking with their team for the latest details on products, pricing, and results.

FAQs on How to Get Cited in LLMs

1. Our product is newer and less known. Can we realistically compete with a 15-year-old brand like Expensify in AI answers?

Yes, and this is actually the most important insight from the Expensify case. Expensify’s citation advantage comes from its historical footprint. Ramp is 5-6 years old and already outranks Expensify in most AI answers because they built structured, comparative, data-backed content that LLMs like ChatGPT or Perplexity can confidently pull from. Age helps, but intentional content infrastructure matters more. A newer brand that publishes 10 well-structured comparison pages with real metrics will show up faster than an established brand coasting on legacy citations.

2. We don’t have G2 reviews or NPS scores to cite. What do we use instead?

You don’t need to wait for G2 data to build citable content; that takes time. Internal data works just as well, or better, because it’s original. Examples include customer time-to-value numbers, onboarding success rates, support ticket resolution times, feature adoption rates, and results from a cohort of customers after 90 days.

If you’ve run any kind of customer survey, those findings count. Try to quantify the benefits. For example, “customers reduced close time by an average of 4 days” is more effective than “our customers save time.”

3. Should we be publishing content about competitors even if it might feel aggressive?

Playing safe won’t get you results. Ramp has 20+ pages that explicitly name competitors, compare features, and publish switching stories from customers who left those competitors. Brex does the same in the other direction. These pages are structured, factual comparisons built around real data that help buyers make a more informed decision. That’s genuinely valuable content, and LLMs recognize it as such because it answers a real question a buyer would ask.

4. Is there a risk of being cited inaccurately? If so, how do we manage it?

This is a real risk, and it’s more common than people realize. If your pricing page is complicated, your feature descriptions are vague, or you haven’t clearly stated what your product does and doesn’t do, an LLM will fill the gaps with guesswork or third-party sources, sometimes inaccurately.

The fix is the same as the opportunity: publish clear, structured, factual content about your product. Ramp’s llms.txt explicitly includes offer details with a note saying they “should not be paraphrased or modified.” That’s a direct attempt to control how AI represents the brand. The more precise and machine-readable your core product information is, the less room there is for hallucination.

5. We already publish high-quality content consistently. Why aren’t we showing up?

Volume isn’t the issue. Most companies that publish consistently but don’t appear in AI answers are producing content about their own product, their own features, their own roadmap; that’s content written for existing customers or brand awareness. This kind of content rarely gets cited in buying-context AI queries. For citations, you need content that answers the question a buyer is asking before they’ve picked a vendor: “what’s the best alternative to X,” “how does A compare to B,” “why are companies switching from X to Y.” If your blog is all inward-facing, you’re not in the conversation that happens before someone searches for you.