TL;DR: We ran real buyer queries across ChatGPT, Gemini, and Perplexity to see which project management tools showed up when buyers asked questions with different intents. Seven names surfaced consistently, and that was not random. These brands have built the kind of content depth AI platforms can cite, interpret, and trust. This article breaks down what they are doing right, from comparison pages to smarter plays around timing and audience ownership. If your project management tool isn’t on this list, it shows you exactly what’s missing and how to close that gap fast.

There are 200+ project management tools in the market today.

But when a buyer asks ChatGPT, Perplexity, or Gemini for a recommendation, they don’t get all the options. They get maybe ten. And it’s almost always the same names that pop up across queries.

That changes the game. Earlier, even if you were not the top result, you still had other ways to get discovered: you could compete through rankings, ads, or review sites. Now the shortlist is increasingly shaped by AI surfaces.

And AI platforms are not just naming tools. They are framing them. They decide which product is best for startups, which one fits enterprise teams, and which one works better for a specific use case.

So the question is no longer just whether your tool shows up. It is whether AI understands your product well enough to recommend it in the right context.

To see which project management tools are winning that visibility, we ran four buyer queries across ChatGPT, Gemini, and Perplexity. We then analyzed what the winning brands did to keep showing up. This article breaks that down.

How We Identified The Top 7 Project Management Tools

Identifying the top 7 wasn’t straightforward. The project management category is crowded, and depending on what you ask (or which AI you use), the list shifts.

So we approached this the way a real buyer would. We first narrowed down a list of questions a buyer would ask across different use cases, including:

- Which are the 15 best project management tools in 2026?

- Best project management software for small teams/small businesses

- Which project management software is best for startups with AI support?

- Which project management tool is best for enterprises? List 15 of them

With the first query, we cast a wide net. For the next three, our focus was on narrow, high-intent questions – by team size, by growth stage, by buyer type. Together, they gave us a picture of which tools have built broad enough authority to show up everywhere, and which ones only appear when the context is right.

We then tracked every recommendation across all three platforms and pulled out the names that kept getting cited across queries.

What We Found

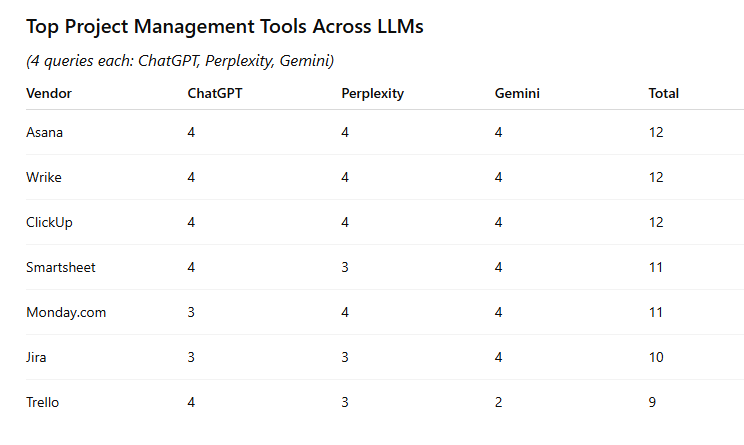

Across 4 queries and 3 platforms, 7 tools showed up consistently:

- Asana

- Monday.com

- ClickUp

- Wrike

- Jira

- Smartsheet

- Trello

Here’s a snapshot of each tool across all four queries:

| Tool | Best Tools | Small Teams | Startups + AI | Enterprise |
|---|---|---|---|---|
| Asana | ✓ | ✓ | ✓ | ✓ |
| Monday.com | ✓ | ✓ | ✓ | ✓ |
| ClickUp | ✓ | ✓ | ✓ | ✓ |
| Wrike | ✓ | ✓ | ✓ | ✓ |
| Jira | ✓ | ✓ | ✓ | ✓ |
| Smartsheet | ✓ | ✓ | – | ✓ |
| Trello | ✓ | ✓ | – | – |

Notice how Trello drops off in the startup and enterprise queries, and Smartsheet disappears when the question is about AI support. Both tools offer AI features, yet they didn’t show up in those queries, most likely because of gaps in their content strategy.

Neither brand has built enough coverage around those specific buyer contexts for AI platforms to confidently cite them there.

The five tools that appear across all four query types – Asana, Monday.com, ClickUp, Wrike, and Jira – have done something different. They’ve built content authority that stretches across buyer types.

Whether you are a startup founder, an enterprise IT head, or a product manager, these tools show up when you ask any popular AI platform for a recommendation. That points to a deliberate content strategy at each brand, tailored to its positioning.

Now, let’s take a look at this table:

Source: ChatGPT

Some tools made the top 7 with a perfect 12/12 score (cited for all four queries on all three platforms), but, interestingly, some very popular tools didn’t make the list at all. When we analyzed why, the most obvious pattern was a gap between their positioning and their content depth.

For example, Microsoft Project and Primavera have strong brand recognition in the enterprise space. But neither has the organic content infrastructure AI platforms need to cite them confidently. The brand exists, but the content authority doesn’t.

You might assume that’s down to narrow positioning, but that isn’t the case: Jira is narrowly positioned, yet it shows up everywhere. Whether your positioning is narrow or wide, you need content depth to back it up. When an AI platform looks for a source to answer a buyer’s question, a product page isn’t enough. It needs a content library that signals authority within that lane.

That’s the real variable.

The following sections show how to build that content depth, drawing on how the top companies on the list built their authority.

Creating “Best of” Lists and Comparison Pages

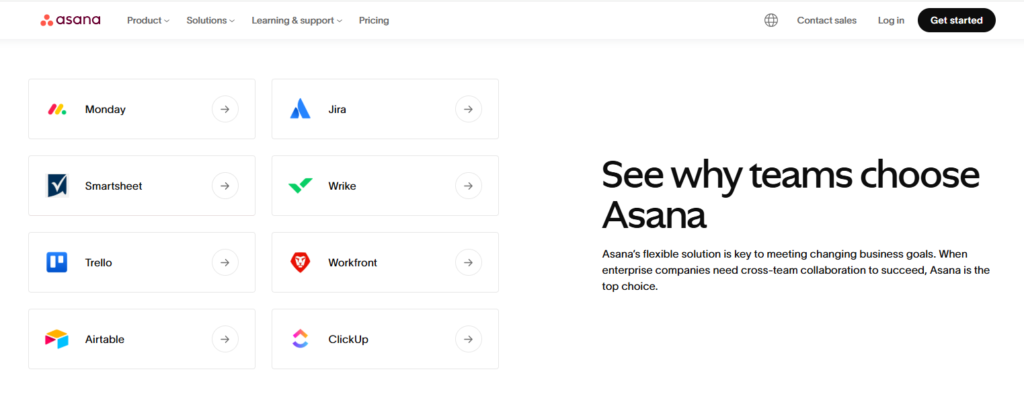

To understand this point, let’s talk about Asana.

Asana, which scores 12/12 across our queries, has one of the most thorough comparison hubs in the category: dedicated pages for every major competitor, each structured around the exact moment a buyer is deciding.

Source: Asana

But some brands have taken it further. They built pages that AI platforms actively pull from when constructing answers. Let’s look at ClickUp.

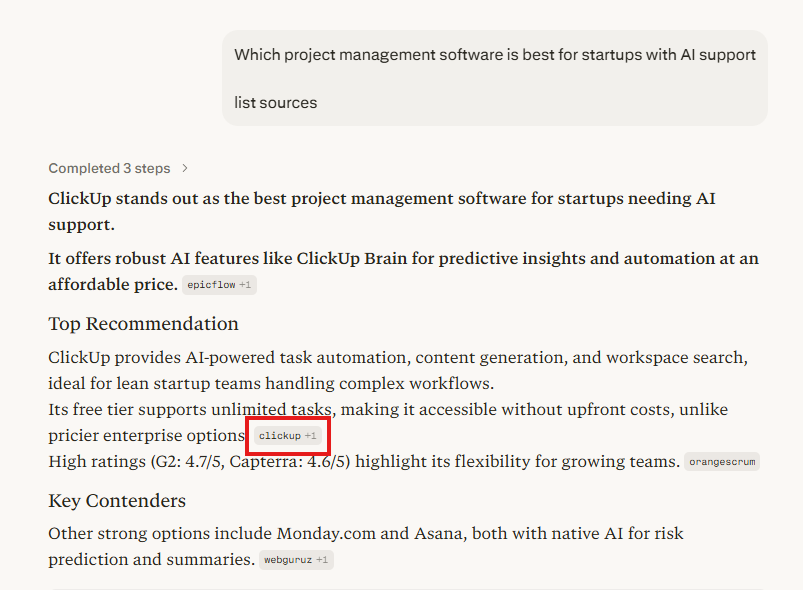

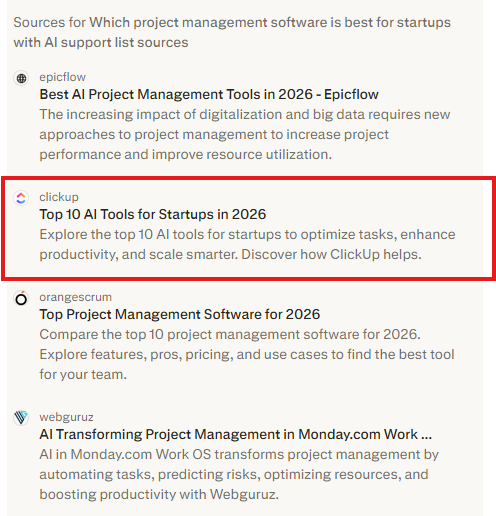

When we ran the query “which project management software is best for startups with AI support,” ClickUp didn’t just appear somewhere in the list. Perplexity named ClickUp as its top recommendation, and the citation pointed directly back to a ClickUp blog post: Top 10 AI Tools for Startups in 2026.

Source: Perplexity

The cited page is a listicle covering the whole category, with ClickUp positioned naturally within it. Perplexity used it as a source to build its answer, which means ClickUp wasn’t just being recommended; it was being consulted.

Source: Perplexity

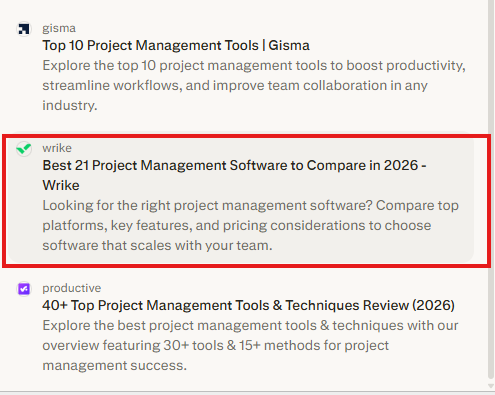

Wrike does the same. Its post Best Project Management Software to Compare in 2026 was pulled as a cited source in Perplexity, alongside third-party publications like Gisma and Productive.

Source: ChatGPT

Monday.com takes it a step further. Its page Software for Project Managers: Best Solutions to Use in 2026 appeared twice in the same Gemini source panel, above Business Wire: a single page, cited twice, for the same query.

Source: Gemini

Comparison pages and “best of” lists do two things at once. They put you straight onto the buyer’s radar, and they turn your website into the primary source an LLM reaches for when it builds an answer.

Third-party review sites like G2 and Capterra occupy the same space, but they cover hundreds of tools, and you have no control over them. Your own “best of” pages can make a more focused, authoritative case for exactly where your product fits.

Concurate Recommends

If you don’t have a “best [your category] tools” page on your own domain, that’s the first gap to close. But the structure of that page matters as much as its existence.

Lead with a clear evaluation framework: what criteria should a buyer use to choose a PM tool? Then cover each tool, including competitors, with enough specificity that the page is genuinely useful to someone making a decision.

You can also add a comparison table, use-case callouts, and testimonials. The goal is to publish the most complete and useful answer to that query anywhere on the web.

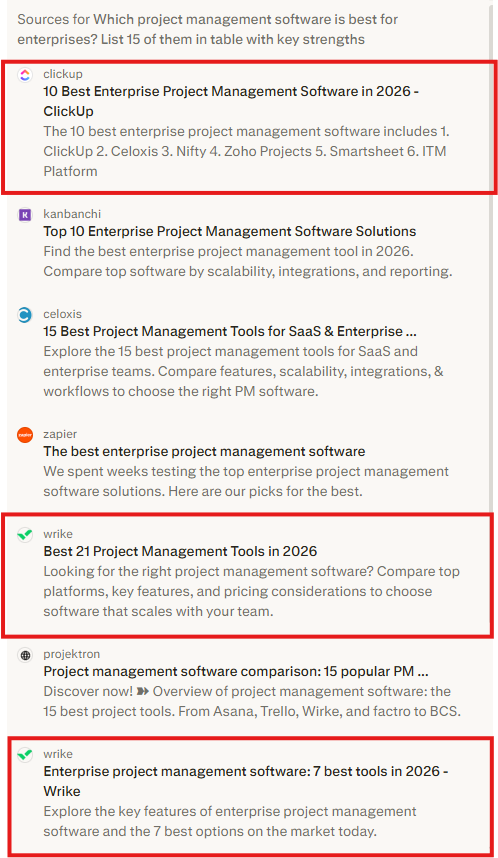

Scaling Content Across Categories or Use Cases

Publishing one strong listicle is an effective tactic for a project management tool. Now imagine applying that tactic to every buyer context or use case. Your citation opportunities multiply with every page, and that’s how you make sure your brand appears across every query type.

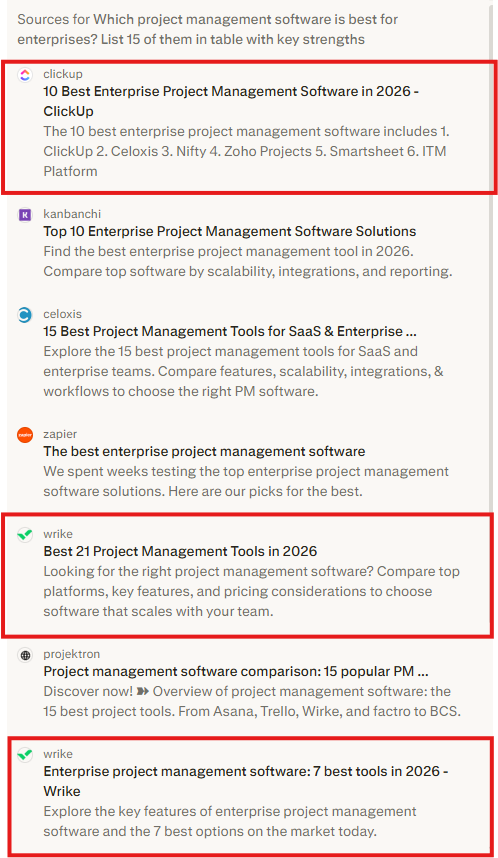

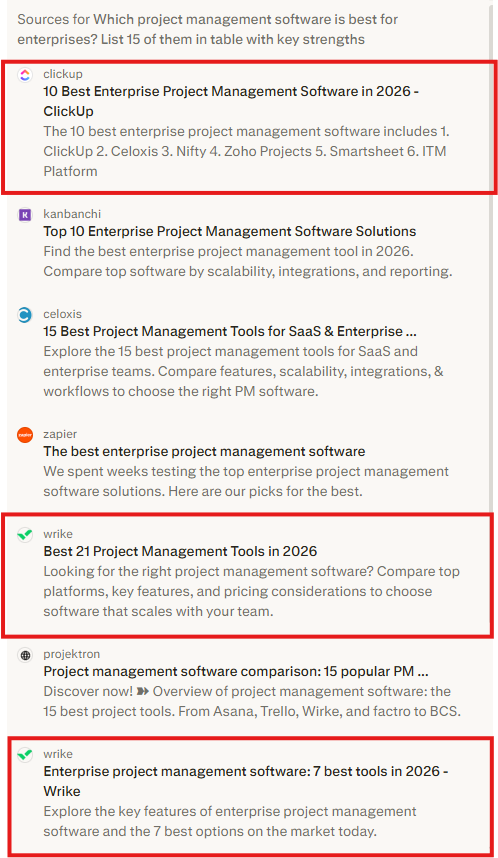

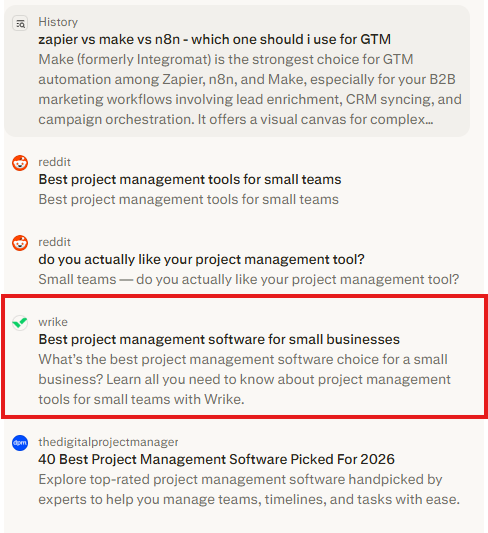

Wrike is the clearest example of this. When we ran the enterprise query, Wrike appeared twice in the same Perplexity source panel: once for its broad listicle and once for its enterprise piece.

Source: Perplexity

Wrike earned two citation slots in the same AI answer because they had two pages built for two slightly different versions of the same query. Their small business page, Best Project Management Software for Small Businesses, earned its own citation slot when that query was run.

Source: Perplexity

Monday.com applies the same idea from a different angle. Rather than scaling by company size, it has scaled its pages by buyer persona. There’s a dedicated page for project managers, a separate one for startups, and another specifically for enterprise buyers. Each page was cited independently across different queries.

Source: Perplexity & Gemini

The underlying logic is the same across both brands: a buyer searching “best PM tools for enterprises” is not the same buyer as one searching “best PM tools for startups.” Each of them deserves a page that speaks directly to their context, and each of those pages becomes an independent citation point for that specific query.

ClickUp maps its content to query variations too. There’s a dedicated post for startups, one for individuals, and one specifically for AI tools for startups. Each targets a different entry point, so when any of those queries gets asked, there’s a ClickUp page waiting for it.

Concurate Recommends

Before building new content, look at what you already have. Most SaaS brands have one general “best tools” post that tries to serve every buyer at once. That’s a good starting point.

The next step is to break that into focused, standalone pages, one per segment, company size, or use case that matters to your buyers. A programmatic approach works well here. This way, each piece of content earns its place in a specific answer instead of trying to rank for everything at once.

Timing Content to a Market Moment

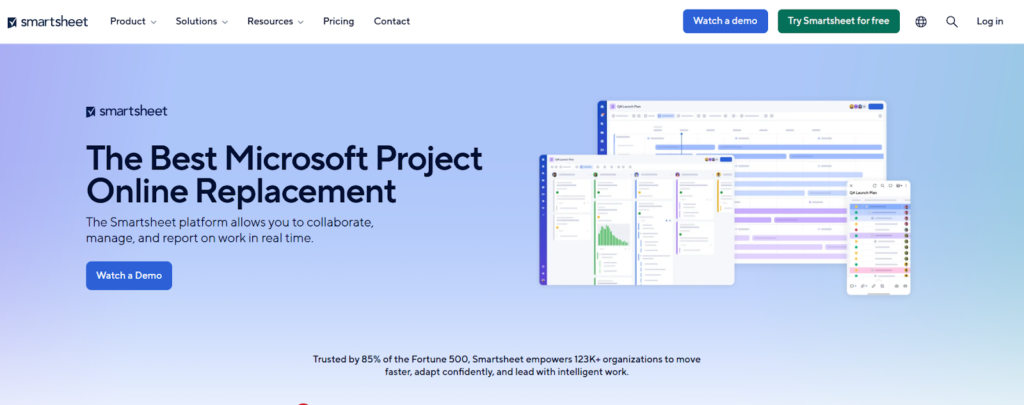

This one stood out, and when we noticed it, we had to applaud Smartsheet’s strategy.

While the four brands above built their visibility through consistent content execution over time, Smartsheet did something different. It identified a specific, time-bound moment in the market and built a content strategy around it.

Microsoft Project Online retires on September 30, 2026; every organization currently using it needs to migrate. This is an active, urgent decision that’s happening right now across thousands of enterprise teams.

Smartsheet saw this moment coming and built content specifically for it. Their Microsoft Project replacement page not only compares the two tools, but also walks buyers through the exact steps to migrate from MS Project to Smartsheet. It answers the question a buyer has at the moment they’ve already decided to leave, not the moment they’re still evaluating.

Source: Smartsheet MS Project Replacement

They also published a broader piece on switching from Microsoft Project that builds the business case for the move, covering workflows, team habits, and implementation planning. It’s a page built for the buyer who needs to convince leadership, their team, and probably themselves too.

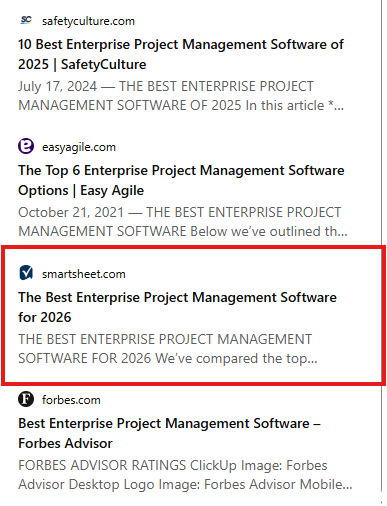

Beyond these migration pages, Smartsheet’s enterprise listicle also appeared as a cited source in Perplexity for the enterprise query, alongside SafetyCulture and Forbes.

Source: Perplexity

This is a fundamentally different content strategy from the others. Smartsheet spotted a small gap in the market’s content and positioning, used it to get a foot in the door, and made the most of it.

They owned one high-intent, high-urgency pain point so completely that when a buyer in that situation turns to an AI platform, Smartsheet is the obvious answer waiting for them.

Concurate Recommends

Every market has moments like this: a competitor sunsetting a product, a changing regulation, a legacy workflow becoming obsolete, a platform shifting pricing, etc. Don’t wait for these moments to happen and then write a reactive blog post.

Instead, identify the next one before it peaks and build a content cluster around it early: for example, a migration guide, a comparison page, and a business case template, each targeting a different stage of the buyer’s decision during that window. By the time the moment arrives, your content is already indexed and waiting to be cited.

Deep Audience Ownership

The other brands on this list built visibility by going broad: more query types, more buyer segments, more use cases. Jira did the opposite. It went deep, owning one specific audience completely.

Visit Jira’s website, and you’ll notice it has no “best project management tools for small teams” post and makes no attempt to chase the general PM buyer. Its comparison hub is deliberately narrow, covering developer-focused tools such as Azure DevOps, Linear, and Pivotal Tracker. Every page speaks to one specific buyer: the engineering team lead evaluating project management for a software development workflow.

This depth is what likely earns Jira a citation every time a query has a technical or engineering dimension, especially in enterprise and startup contexts.

There’s an important contrast here too. Look at how Gemini handled Trello in the same set of queries:

Source: Gemini

“If you want Dead Simple: Go with Trello,” it states.

At first, it looks like Trello has hit a ceiling. Look closer, though, and Trello’s narrow identity appears deliberate. Trello and Jira are both Atlassian products, and they seem to split the market on purpose: one owns the entry point, the other owns depth.

The buyer who starts with Trello and outgrows it doesn’t leave Atlassian; they move to Jira. The funnel is built into the positioning, and the AI reflects this through its recommendations.

Concurate Recommends

Deep audience ownership works when the audience is genuinely distinct and large enough to sustain it. For most PM tools sitting outside the top 7, the temptation is to go broad and chase every possible buyer.

A better approach is to ask: which one buyer type, if we owned them completely, would pull us into every query that includes them? Build content exclusively for that buyer, in their language and around their workflows and evaluation criteria, and let that depth do the work that breadth can’t.

You’ve built the product. Now make sure AI recommends it.

You’re already doing the hard parts right: you’ve invested in a great product, and you may already rank in top search positions. But when a buyer asks ChatGPT or Perplexity for the best tools in your category, being left out of the conversation isn’t just frustrating; it’s hurting your pipeline.

Buyers coming from AI platforms are nearly 3x more likely to convert than those arriving from traditional search. That’s because instead of clicking through links, these buyers get a narrowed list of options that already fit their purpose.

This is exactly where we at Concurate have built our expertise. We help you identify the gaps, build the right content, and turn your SaaS website into a source that AI platforms can rely on. You can follow the strategies in this article with your in-house team, but getting them right takes time, resources, and constant supervision.

Or you can partner with us at Concurate. We have already delivered results for our enterprise cybersecurity client, who is now busy handling 100+ inbound leads from organic content.

You can book a free strategy call to get a feel for things, identify your weak spots, and see how we can help you reach your AI visibility goals.

Disclaimer: This article is based on publicly available information from vendor websites, case studies, and third-party platforms. The evaluation reflects our independent analysis, and we recommend checking each vendor’s website or speaking with their team for the latest details on features, pricing, and results.

FAQs

1. Is there a difference between being recommended by an AI and being cited as a source? Which one matters more?

Both matter, but they’re not the same thing. Being recommended means the AI names your product in its answer. Being cited as a source means the AI used your content to build that answer. The latter is harder to earn and more durable; it means the AI treats your domain as an authority on the category, not just a product it’s heard of.

2. Our PM tool is smaller and less established than the brands on this list. Can this still work for us?

Yes. This works for smaller brands as well as it does for large ones. The brands on this list didn’t build this overnight. More importantly, AI platforms don’t appear to weigh domain authority the same way Google does. A well-structured, specific page that directly answers a buyer’s query can earn a citation slot even if your overall domain authority is lower. The advantage of being smaller is that you can go narrower faster, picking one buyer segment, one use case, or one market moment and owning it completely before the larger brands notice.

3. Should we build new content for AI visibility, or can we repurpose what we already have?

You don’t need to build new content from scratch if you already have a general “best tools” post, a few use-case pages, and some comparison content buried in the blog. Audit what you have and then restructure it with clearer headers, dedicated URLs per segment, and proper internal linking between list content and comparison pages. Create new content only for those gaps that restructuring can’t fix.

4. How long before we start seeing results in AI citations?

There’s no fixed timeline. However, brands with consistent, well-structured content across multiple query variations can start showing up in AI source panels within weeks, meaningfully faster than brands with sporadic or unfocused content. The compounding effect takes longer, but with expert handling, the first results come faster than most teams expect.

5. We already have comparison pages. Why aren’t they showing up as sources in AI answers?

This is the most common gap we see. Having comparison pages isn’t enough; where they live on your site and how they’re structured matter just as much. Comparison content that is buried in a blog subdomain, thin on specifics, or not internally linked to related content doesn’t perform as well in AI citation panels as dedicated, standalone pages with clear structure, specific feature breakdowns, and context a buyer can act on.