| TL;DR: AI platforms don’t recommend endpoint security vendors randomly. We tested real buyer queries across ChatGPT, Gemini, Claude, and Perplexity and found that a small group of vendors shows up consistently, regardless of how the question is framed. The reason isn’t just brand or budget. It’s how they’ve built content ecosystems, covered query variations, and positioned themselves across decision-stage moments. If your product isn’t appearing in these AI answers, you’re likely missing from shortlists altogether. At Concurate, this is exactly the gap we help close by building content and visibility strategies that get you picked, not just ranked. |

You heard somewhere that adding FAQs to your webpages helps you get cited in LLMs, so you added them. That didn’t work.

Then you read that structured content helps, so you went back and restructured your pages and blogs with on-point headers, tighter definitions, and clearer formatting.

Still nothing.

Here’s the most frustrating part: there’s no guide to help you crack this, because AI platforms don’t publish their ranking logic. There’s no equivalent of Google Search Console for ChatGPT, Gemini, Perplexity, and Claude, so you can’t see why some vendors (read: your competitors) get recommended and you don’t.

The fact is, ranking on Google and appearing in AI recommendations are two completely different games, and the gap between them has nothing to do with how good your product is.

And in a category as competitive as endpoint security, that gap is costing you deals you don’t even know you’re losing.

So we stopped waiting for a playbook and started looking for patterns.

We donned Sherlock Holmes’ hat, picked up a magnifying glass, and followed the trail (read: prompts) to understand the inner workings of these recommendations.

Here’s what we found:

Our Selection Process for Identifying the Vendors Appearing Across AI Platforms

Before we get into the details, let’s take a quick look at how we processed the data.

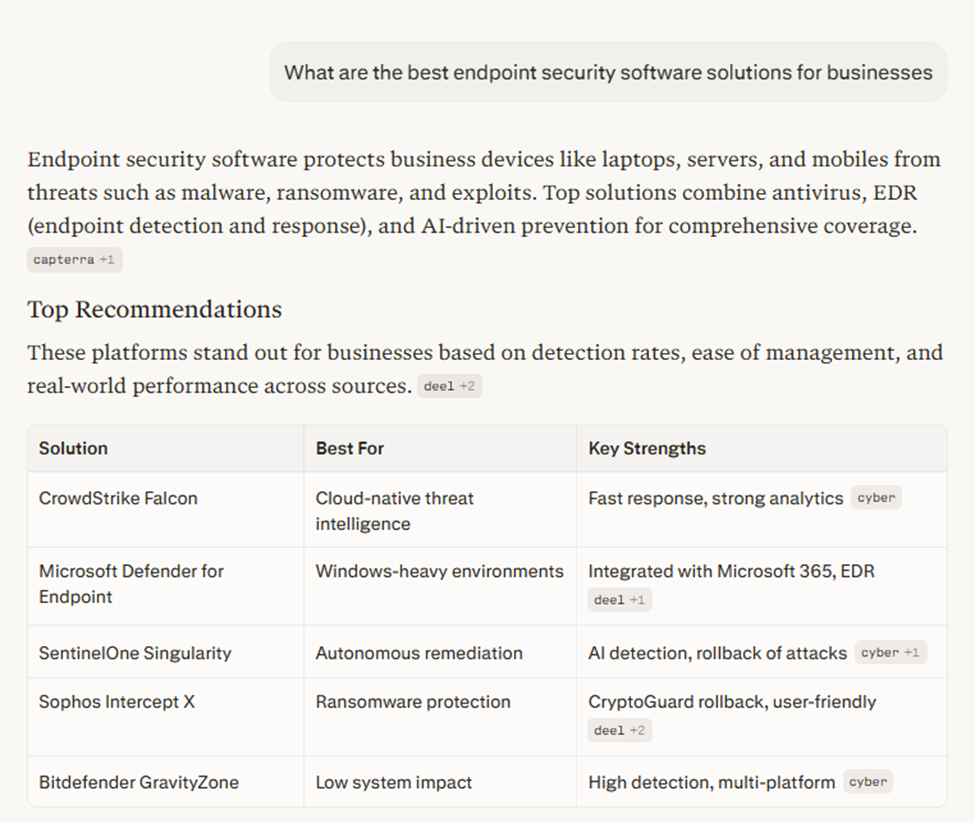

To get to the shortlisted names for endpoint security software, we ran four queries across ChatGPT, Gemini, Claude, and Perplexity:

- What are the best endpoint security software solutions for businesses

- Recommend top endpoint protection platforms with AI capabilities for mid-sized companies.

- Which vendors are leaders in endpoint security solutions

- best endpoint security software for enterprises

Here’s a snapshot from our search:

Source: Perplexity

You see, we understand that not all buyers search the same way. That’s why we ran a couple of queries that covered a broad spectrum of searches – giving us the vendors that dominate the category, and a couple of specific high-intent queries that identified vendors when the stakes were high.

Then, we recorded every vendor that each platform recommended and compared the results across all four AI platforms to spot which names kept coming up regardless of how the question was being asked.

This list is what we ended up with.

Top 7 Endpoint Security Software Recommended by ChatGPT, Claude, Perplexity, and Gemini

Across the four platforms and four queries, 15 unique endpoint security vendors came up at least once. Some names appeared once and didn’t show up again – like McAfee and Trellix.

But some were recommended so consistently that it almost didn’t matter how the question was framed. The seven vendors that kept surfacing across broad queries, enterprise-specific ones, and questions focused on AI capabilities alike, were:

- CrowdStrike Falcon

- Microsoft Defender for Endpoint

- SentinelOne Singularity

- Palo Alto Networks Cortex XDR

- Sophos Intercept X

- Trend Micro Vision One

- Bitdefender

Here’s how the numbers looked:

Endpoint security vendors that got recommended across AI platforms

These are based on 4 queries across ChatGPT, Claude, Perplexity, and Gemini

| Vendor | ChatGPT | Claude | Perplexity | Gemini | Total |
| --- | --- | --- | --- | --- | --- |
| CrowdStrike | 4 | 4 | 4 | 4 | 16 |
| Microsoft | 4 | 4 | 3 | 4 | 15 |
| SentinelOne | 4 | 4 | 3 | 4 | 15 |
| Trend Micro | 4 | 4 | 3 | 4 | 15 |
| Palo Alto Networks | 4 | 3 | 3 | 4 | 14 |
| Sophos | 4 | 3 | 3 | 4 | 14 |
| Bitdefender | 4 | 3 | 3 | 3 | 13 |

CrowdStrike was the only vendor with a perfect score across all four platforms. Microsoft, SentinelOne, and Trend Micro followed closely.

Below Bitdefender, the drop-off was sharp. Vendors like Cisco and Huntress showed up occasionally but never with the same regularity.

We are focusing on frequency because it tells you more than a single mention can. Getting mentioned repeatedly across AI platforms shows how deeply a vendor’s content and brand signals are embedded in the LLM’s training data and retrieval patterns.

The more consistently a vendor appears, regardless of how the question is framed, the stronger its topical authority signal is. In this case, CrowdStrike is acing the ‘don’t show up just once, show up every time’ game.
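For readers who want to repeat this tally with their own queries, here is a minimal sketch of the arithmetic behind the table. The counts are copied from the table above; the function name `summarize` and the "share of maximum" column are our own illustrative additions, not part of any tool.

```python
# Per-platform mention counts copied from the table above.
# Each platform was asked 4 queries, so 4 is the maximum per cell.
QUERIES_PER_PLATFORM = 4
PLATFORMS = ["ChatGPT", "Claude", "Perplexity", "Gemini"]

mentions = {
    "CrowdStrike": [4, 4, 4, 4],
    "Microsoft": [4, 4, 3, 4],
    "SentinelOne": [4, 4, 3, 4],
    "Trend Micro": [4, 4, 3, 4],
    "Palo Alto Networks": [4, 3, 3, 4],
    "Sophos": [4, 3, 3, 4],
    "Bitdefender": [4, 3, 3, 3],
}


def summarize(mentions):
    """Return (vendor, total mentions, share of maximum possible), highest first."""
    max_score = QUERIES_PER_PLATFORM * len(PLATFORMS)
    rows = []
    for vendor, counts in mentions.items():
        total = sum(counts)
        rows.append((vendor, total, total / max_score))
    # sorted() is stable, so vendors with equal totals keep their table order.
    return sorted(rows, key=lambda row: row[1], reverse=True)


for vendor, total, share in summarize(mentions):
    print(f"{vendor:<20} {total:>2} mentions  {share:.0%} of possible")
```

Swapping in your own counts (and adding your own brand as a row) gives a quick baseline to compare against after each content push.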

Why Were These Endpoint Security Vendors Getting Cited So Often Across AI Platforms

We dug deeper because, beyond the frequency, we wanted to understand what the driving factors were for these recommendations.

Interestingly, we noticed that AI platforms weren’t just dropping these names; they were recommending each vendor for a distinct reason.

For example, CrowdStrike was mentioned for speed and scale, SentinelOne for automated threat response, Microsoft Defender for organizations already running Microsoft 365, Sophos for centralized management, and so on.

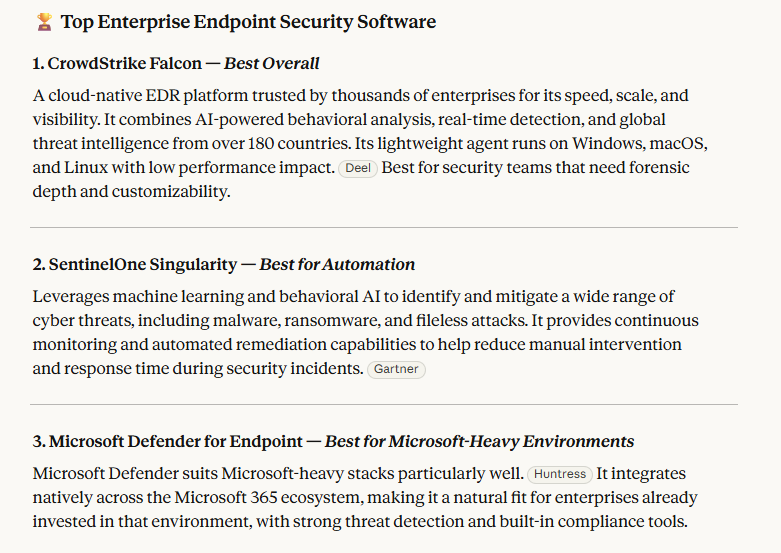

Source: Claude

Notice the pattern here? Different wording, different formatting, maybe even different ordering, but the vendors and the reasons behind them remained remarkably stable.

It became clear that each vendor owned a distinct position in the AI answers, which tells you something about how these companies have shaped their presence and messaging over time.

We weren’t going to chalk this up to coincidence. This kind of consistency points to a deliberate, strategic process these vendors have followed to establish a clear, recognizable identity in the market, one that the AI platforms have absorbed and now reflect back to buyers.

Which led to our next quest: what exactly did they do to get there?

Why Do These Seven Vendors Stand Out?

We wondered: what separates these seven vendors from the rest of the pack?

It’s not just budget or the brand age – several well-known endpoint security companies like McAfee and Trellix didn’t make this list.

What these seven have done is put deliberate effort into how they show up on the web. We are not talking about just their websites (although that’s a big part of it), but about every surface where a buyer might be researching endpoint security, and where an AI platform might be crawling for a credible answer.

They’ve created content that other people reference, link, and cite independently. That accumulated signal is what AI platforms pick up on, and it compounds over time.

How? Allow us to show some examples:

Build Websites That Are Content Ecosystems

What do most endpoint security vendors’ websites have? A product page, a few blogs, maybe a resources tab, and sometimes a whitepaper too, right? That’s the bare minimum, but there’s more to it.

The seven vendors on our list have built websites that double as content ecosystems. They have sprawling libraries of educational resources, research, and reference material that exist to answer the questions buyers and practitioners are actively asking, not just to sell their product.

This is critical for AI visibility because AI platforms tend to cite the most informative content. And as more websites link to a page, AI platforms are more likely to trust it as a credible source.

CrowdStrike does this beautifully. It has created an ‘Adversary Hub’ which is a dedicated portal tracking over 265 named threat actors, complete with detailed profiles, attack patterns, and intelligence updates.

Since this is updated regularly, other experts from the industry reference it, cite it, or look to it as a resource. This kind of organic, third-party linking is exactly the signal AI platforms use to determine what’s worth mentioning.

Source: CrowdStrike Adversary Hub

A similar pattern is seen in SentinelOne’s Cybersecurity 101 hub, a dedicated section of their website covering hundreds of foundational topics, from what EDR means to how ransomware rollback works.

One would also notice how none of it is a product pitch; rather, it is the kind of educational content that practitioners, students, and buyers search for regularly. When an AI platform needs to answer a basic but important question about endpoint security, SentinelOne’s hub is consistently among the first places it finds a clean, structured answer.

Source: SentinelOne’s Cybersecurity 101

In fact, almost all vendors in our list publish an annual threat report based on original research. It’s a pattern worth tracking. These vendors have built content that exists to be useful and informative, creating an ecosystem that keeps working for them. And because it is genuinely useful, other people link to it, which signals to AI platforms that it is credible enough to cite.

Answer All Possible Variations of the Buyer’s Query

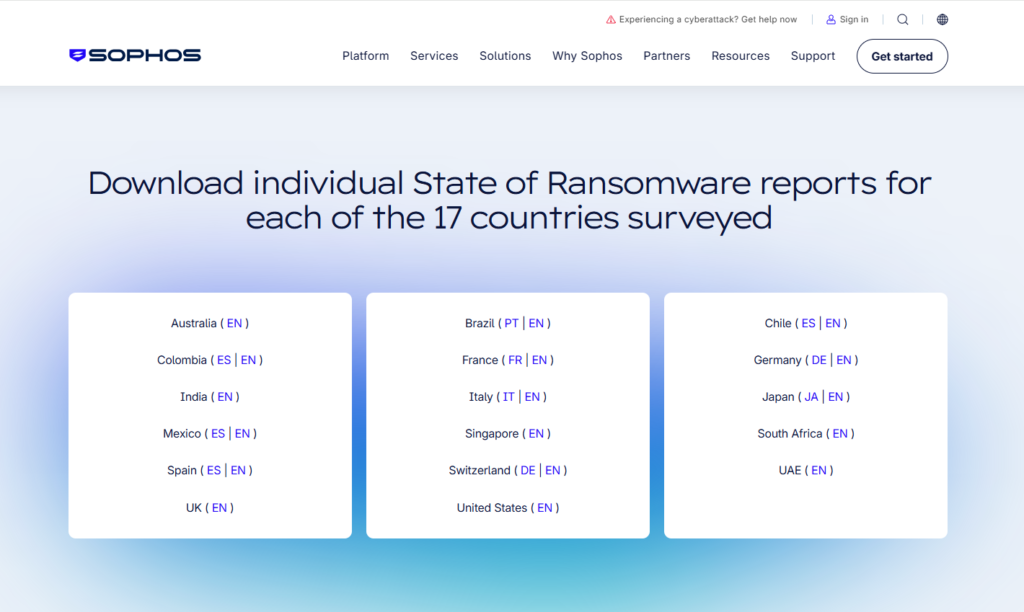

Let’s take a look at how Sophos distributes its ‘State of Ransomware’ research. Instead of publishing a single report, Sophos broke it down into industry-specific versions: one for manufacturing, one for healthcare, another for financial services, and yet another for enterprise.

Source: Sophos State of Ransomware Report

Each version targets a different buyer searching from a different context: A CISO at a hospital searches differently than a procurement lead at a bank, and Sophos shows up for both.

In fact, they even split it by country, with versions for 17 different countries:

Source: Sophos State of Ransomware Report

Creating multiple meaningful versions of the same report, each matched to a different version of the buyer’s question, reaches different audience groups and builds consistent AI visibility.

But there’s another layer to this (and why this works beyond just matching search queries).

AI platforms like ChatGPT, Gemini, and Perplexity don’t analyze queries in terms of ‘keywords’; they work on semantic clusters. So, when a vendor publishes content that covers EDR, ransomware, threat hunting, zero-day attacks, incident response, MITRE ATT&CK, and endpoint detection across dozens of interconnected pages, the AI platform starts associating that brand with the entire topic neighborhood, not just one corner of it. The more semantically comprehensive your content is, the more an AI platform treats your brand as an authority on the category itself.

That’s another reason why SentinelOne’s Cybersecurity 101 hub keeps earning the brand a place in these recommendations.

This means, if you are an endpoint security vendor, you can create content libraries around use cases and industries like:

- Endpoint security for healthcare organizations

- Endpoint security for remote workforces

- Endpoint security for OT environments

This is where programmatic SEO becomes genuinely powerful: you develop a framework for systematically covering every use case, industry, buyer persona, and threat scenario your product addresses. Cover each one in real depth on its own page, and you build the semantic authority that makes AI platforms trust your brand across the entire category.
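To make the "framework" idea concrete, here is a minimal sketch of how a content plan could be enumerated before any writing starts. The topic and segment lists reuse examples from this article; the function names and the slug format are hypothetical choices for illustration, and each generated entry is only a brief for a page that still needs genuine, in-depth writing.

```python
import re
from itertools import product

# Example inputs taken from the use cases mentioned in this article.
topics = ["Endpoint security", "EDR"]
segments = ["healthcare organizations", "remote workforces", "OT environments"]


def slugify(text):
    """Lowercase the title and join words with hyphens for use in a URL."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_content_plan(topics, segments):
    """Pair every topic with every segment so that no combination is missed."""
    plan = []
    for topic, segment in product(topics, segments):
        title = f"{topic} for {segment}"
        plan.append({"title": title, "slug": slugify(title)})
    return plan


for page in build_content_plan(topics, segments):
    print(page["slug"], "|", page["title"])
```

The value of the grid is coverage, not volume: it shows at a glance which buyer contexts you have not addressed yet, so you can prioritize the ones your product genuinely serves.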

Related Read: 5 Strategies Top Cybersecurity Companies Use to Win AI Search Visibility (And How You Can Do It Too)

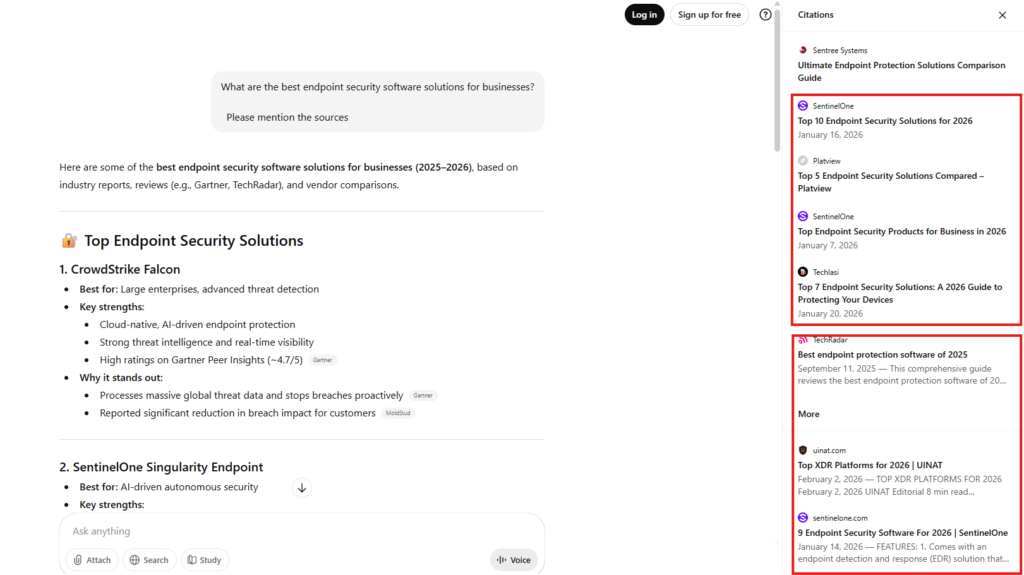

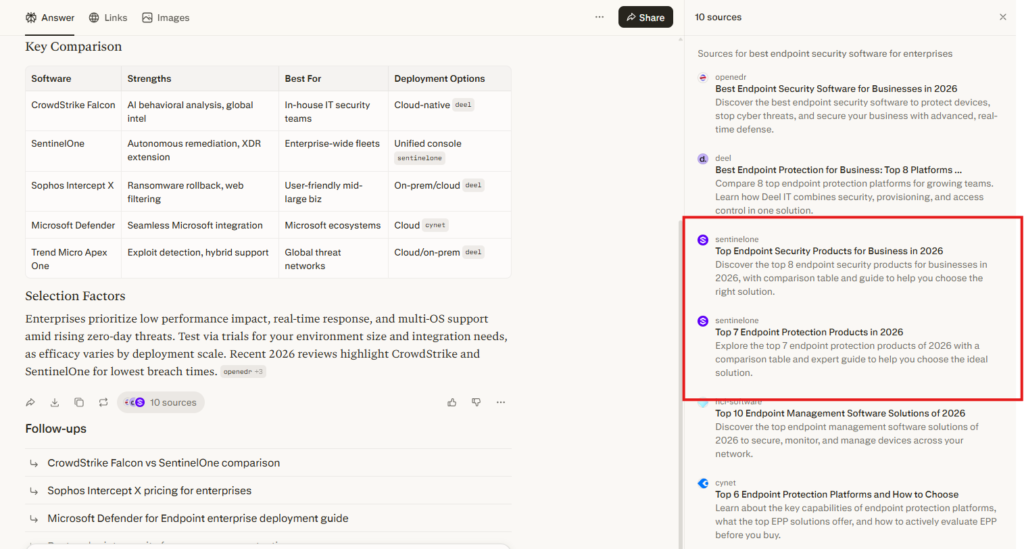

Publish Listicles Around the Exact Queries Buyers Are Searching For

This one feels counterintuitive: Why would you publish a list that sends your buyers to a competitor’s page? But look at what happens behind the curtains when a buyer asks an AI platform about the best endpoint security software for their business.

When we ran this query on ChatGPT and asked it to show its sources, SentinelOne appeared three times in the citations panel. Here, it was not just being recommended as a vendor; it was being treated as a source that ChatGPT was actively using to build its answer.

Source: ChatGPT

Notice how ChatGPT is pulling from three separate listicles from sentinelone.com itself:

- “Top 10 Endpoint Security Solutions for 2026”

- “Top Endpoint Security Products for Business in 2026”

- “9 Endpoint Security Software For 2026”

We identified the same pattern on Perplexity. Two SentinelOne list pages appeared among the 10 sources cited for the same query, sitting alongside independent sources like TechRadar and OpenEDR.

Source: Perplexity

SentinelOne is appearing here as an authority AI is consulting to build the answer. That’s a fundamentally different level of visibility, and it comes entirely from publishing well-structured list content that directly matches what buyers are searching for.

The reason this works is simple. When a buyer asks an AI platform, “What are the best endpoint security tools?”, the AI looks for pages that comprehensively answer that exact question. A structured listicle with clear vendor descriptions, use cases, and a comparison table is precisely the format it needs.

As an endpoint security software vendor, if you publish such pages, there’s a high chance of you getting cited not just as a product in the list, but also as the source behind it.

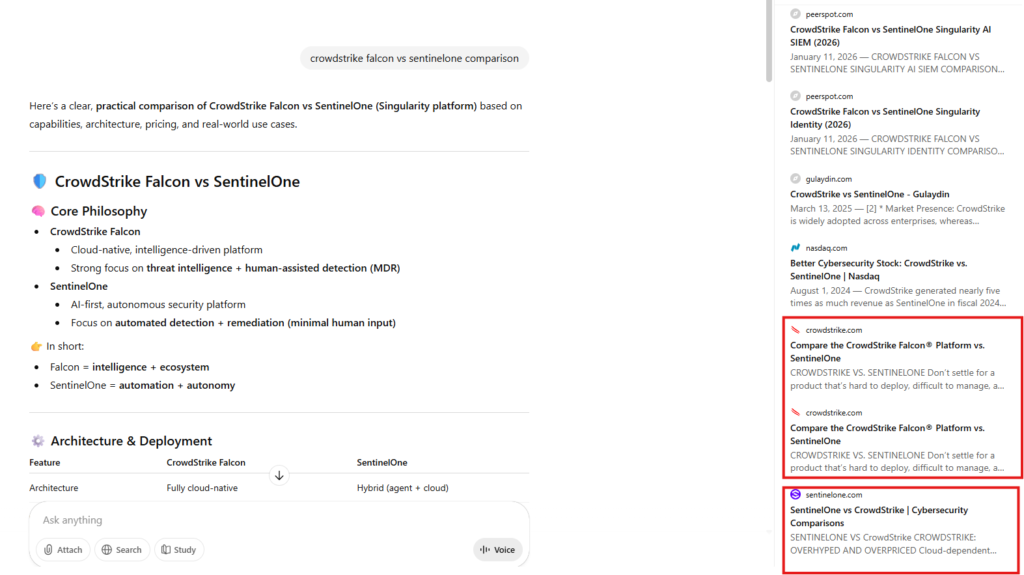

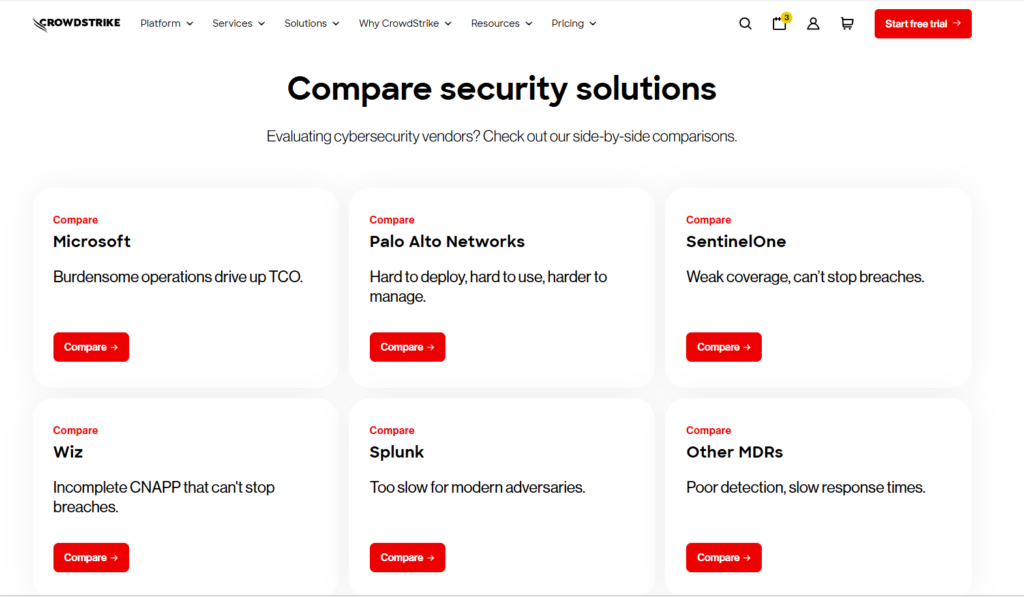

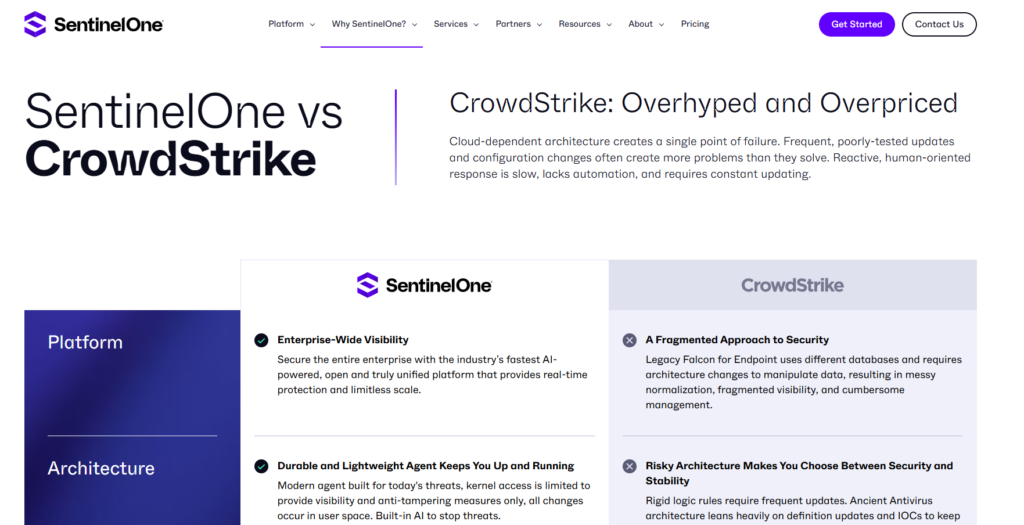

1:1 Comparison Content for When Buyers Are Close to Deciding

Once the listicles have done their job and buyers have narrowed down their choices to only a couple of options, that’s when 1:1 comparison pages come into play.

So when a buyer is searching “your product vs your competitor,” who can better explain your product than yourself?

A mistake we see most vendors make is leaving the 1:1 comparison to third-party review sites like G2 or PeerSpot. But based on the results we saw in our experiment, that’s not helping.

When we ran “CrowdStrike Falcon vs SentinelOne comparison” on ChatGPT, the citations panel gave us this:

See the two pages from crowdstrike.com itself? And one from sentinelone.com?

Both vendors’ own pages appear directly as sources ChatGPT was pulling from to build its answer.

This happens because both vendors have invested in dedicated comparison pages built specifically for this moment. CrowdStrike runs an entire comparison hub with individual pages for each major competitor.

Source: CrowdStrike

SentinelOne does the same with its own comparison pages, including a dedicated “SentinelOne vs CrowdStrike” page that breaks down architecture, performance, and platform capabilities side by side.

Source: SentinelOne

These pages serve two purposes at once:

- For the buyer, they answer the exact question being asked at the exact moment it’s being asked.

- For AI platforms, they’re a structured, authoritative source for any comparison query involving these vendors.

That’s the reason you’ll see this being done by other top vendors like Palo Alto Networks and Trend Micro as well. So, when a buyer turns to an AI platform to help them decide, you, as a vendor, have already shaped the answer.

Want to Be on the AI Shortlist? That’s Exactly the Work We Do at Concurate

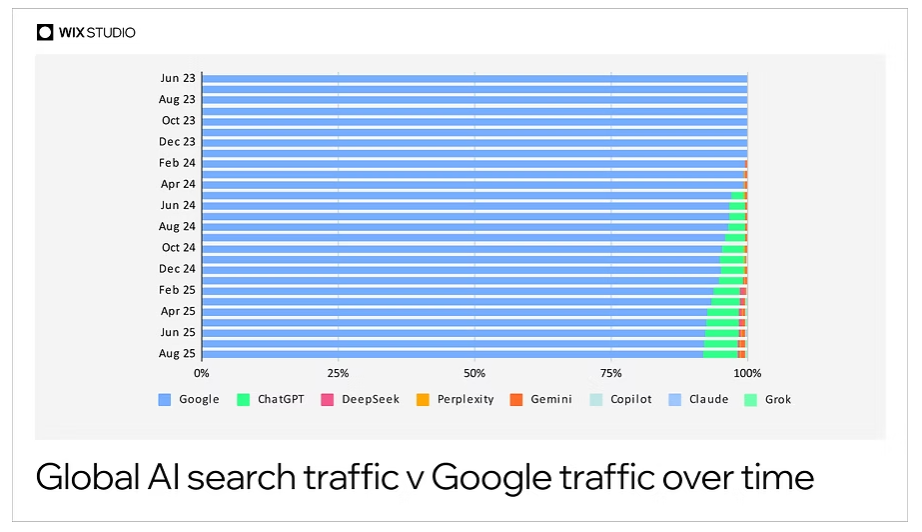

Look at this visual. According to Wix Studio’s AI Search Lab, AI search has seen average year-over-year traffic increases of over 416%, with the ratio of Google users to AI search users narrowing from 10:1 in 2024 to 4.4:1 in August 2025. That’s how fast the shift is happening.

Source: Wix Studio Survey

Every month, that gap is closing. Building this kind of visibility needs patience and a strategic approach. Creating an entire content ecosystem, mapping semantic topic clusters, publishing structured listicles, and developing a full comparison content hub requires dedicated focus, deep category understanding, and a clear execution plan that most stretched marketing teams simply don’t have bandwidth for.

But, at Concurate, this is exactly the work we do.

For instance, one of our enterprise cybersecurity clients wanted to build their organic visibility across both Google and AI platforms. They wanted to focus on the right content, structured around how their buyers actually research and evaluate solutions. Within six months, the work we did for them drove 100+ inbound leads entirely through organic content.

We understand the buyer’s journey, the questions they ask, the comparisons they run, and the queries they type into AI platforms in the middle of their lunch before a vendor shortlist meeting. That understanding is what lets us build content that shows up in those exact moments.

If you think your product deserves to be on the shortlist when a buyer asks an AI for endpoint security recommendations, and it isn’t showing up yet, that’s a gap worth closing now. For that, you need the right generative engine optimization agency for your cybersecurity company. That’s us.

Book a call with us to map out exactly where you stand and what it would take to get there.

Frequently Asked Questions

1. Why does my endpoint security product not show up in ChatGPT or Perplexity recommendations even though it ranks on Google?

Google and AI platforms use different signals. Google rewards backlinks and on-page SEO. AI platforms look for content that is semantically comprehensive, clearly structured, and widely referenced. A product can rank well on Google and still be invisible to an AI platform if it hasn’t built the topical depth LLMs associate with category authority.

2. How long does it take to start appearing in AI recommendations?

There’s no fixed timeline. Vendors who execute consistently across content ecosystems, structured pages, and listicles typically see their AI visibility improve gradually rather than overnight.

3. Do we need to create separate content specifically for AI platforms, or does our existing content work?

Your existing content can work, but likely needs restructuring. Adding clear headers, FAQ sections, and comparison tables to existing pages can meaningfully improve how AI platforms absorb and cite your content.

4. Is programmatic SEO safe for endpoint security brands, or does it risk looking spammy?

It is risky only when it produces thin, templated content. Done right, it’s a systematic way of covering every meaningful use case and buyer scenario your product addresses, with genuine depth on each page. Examples include “endpoint security for healthcare” or “EDR for remote workforces” pages that answer the real questions specific buyers are asking.

5. How do we measure whether our content is getting cited by AI platforms?

Run your target queries directly across ChatGPT, Perplexity, Claude, and Gemini, and note whether your brand or content appears in responses or source citations. Also track unexplained spikes in branded search volume, which often correlate with AI-driven discovery. Dedicated GEO tracking tools are emerging, but manual query monitoring remains the most reliable method for now.
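If you log your manual checks, a small script can keep the bookkeeping consistent. The sketch below assumes you paste in each AI answer and its cited URLs by hand; it does not call any AI platform’s API. The brand "ExampleSec", the domain "examplesec.com", and the sample observation are hypothetical placeholders, not real data.

```python
from urllib.parse import urlparse


def check_visibility(brand, domain, response_text, cited_urls):
    """Report whether a brand is named in an answer and which of its pages are cited."""
    mentioned = brand.lower() in response_text.lower()
    cited_pages = []
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        # Match the bare domain and any subdomain such as www. or blog.
        if host == domain or host.endswith("." + domain):
            cited_pages.append(url)
    return {"mentioned": mentioned, "cited_pages": cited_pages}


# Hypothetical, hand-recorded observation for one query on one platform.
observation = {
    "platform": "ChatGPT",
    "query": "best endpoint security software for enterprises",
    "response_text": "Popular options include ExampleSec and several others.",
    "cited_urls": [
        "https://www.examplesec.com/blog/top-endpoint-security-tools",
        "https://reviews.example.org/endpoint-security",
    ],
}

result = check_visibility(
    "ExampleSec",
    "examplesec.com",
    observation["response_text"],
    observation["cited_urls"],
)
print(observation["platform"], observation["query"], result)
```

Recording "mentioned" and "cited" separately matters because, as the SentinelOne example shows, being named as a vendor and being used as a source are two different kinds of visibility.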